Over the past few months, I’ve been noticing how casually AI image generation is discussed. Since the release of newer models, the tone around it has shifted. It is often framed as something immediate, almost effortless, as if producing images had been reduced to a simple input-output interaction. The idea that “anyone can do it now” comes up frequently, and on the surface, it seems to hold. The tools are accessible, the results are fast, and the volume of generated content has increased to the point where it feels constant.

What starts to feel off

At the same time, when I look at these images more closely, a different pattern starts to emerge. The issue is rarely obvious or technical in a direct way. It is not about whether an image renders correctly, but about how it holds together. There are small inconsistencies that begin to accumulate. Light behaves slightly differently from one image to another, materials don’t fully resolve, and proportions feel slightly off. More than that, there is often a lack of coherence across a series. Images exist individually, but they don’t seem to belong to the same visual world.

What becomes noticeable is not a limitation of the tools, but the absence of direction behind them. The images are generated, but they are not constructed.

What usually exists before an image

When I think about visual campaigns outside of AI, the image is never the starting point. There is always a layer that precedes it, even if it remains invisible in the final result: references, moodboards, locations, casting decisions, a sense of atmosphere and context. All of this defines the direction before anything is produced. The image is a consequence of that thinking, not the beginning of it.

With AI, that layer often feels compressed or skipped entirely. The process moves directly to output, and what gets lost is the structure that gives the visual its depth and coherence.

From prompting to constructing

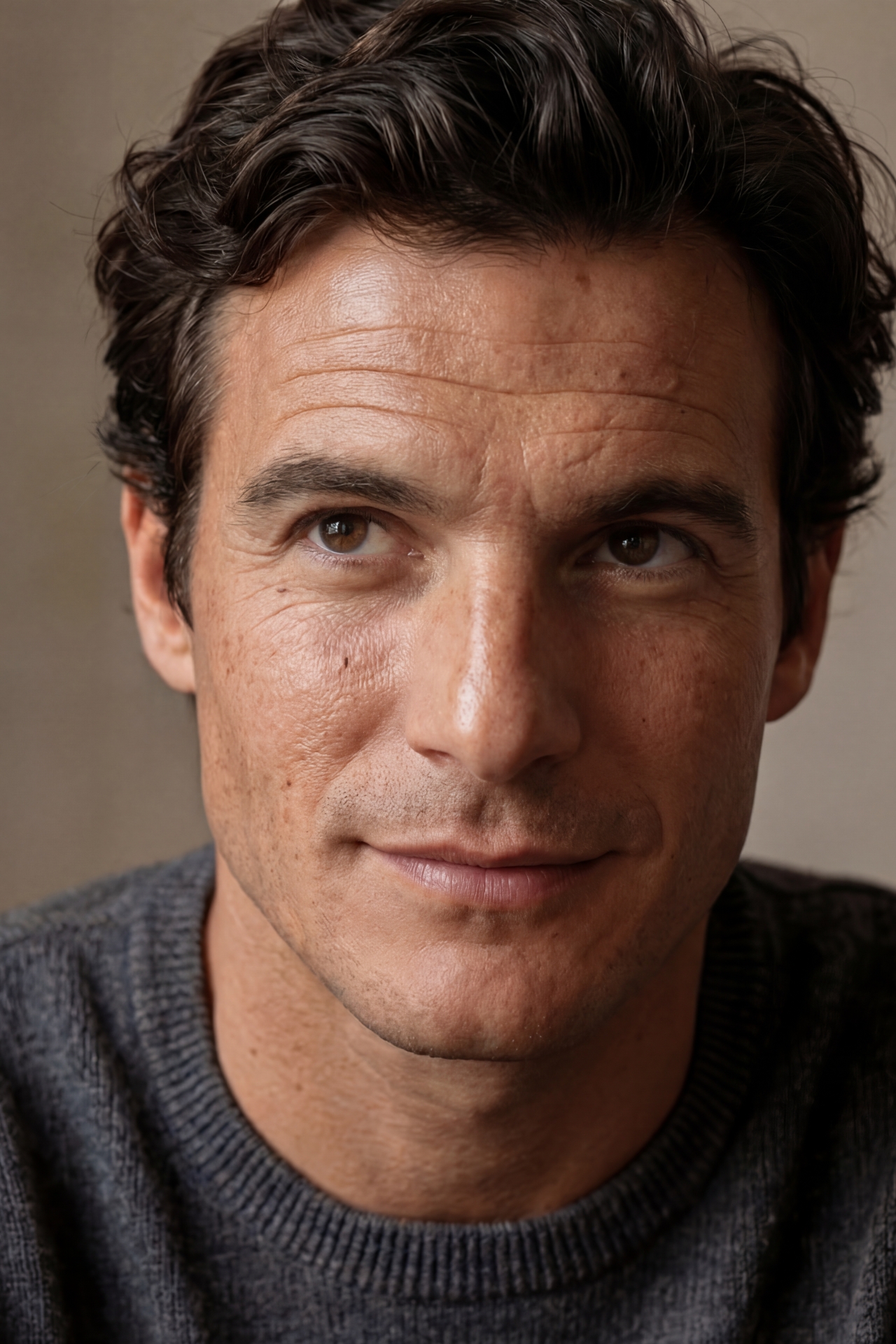

Spending more time working with these tools changed how I approach them. At the beginning, prompting felt technical, almost detached, as if the task was simply to find the right combination of words to unlock a specific result. But that approach quickly reached its limits. The more I tried to push towards something consistent and editorial, the more the process started to resemble a photoshoot.

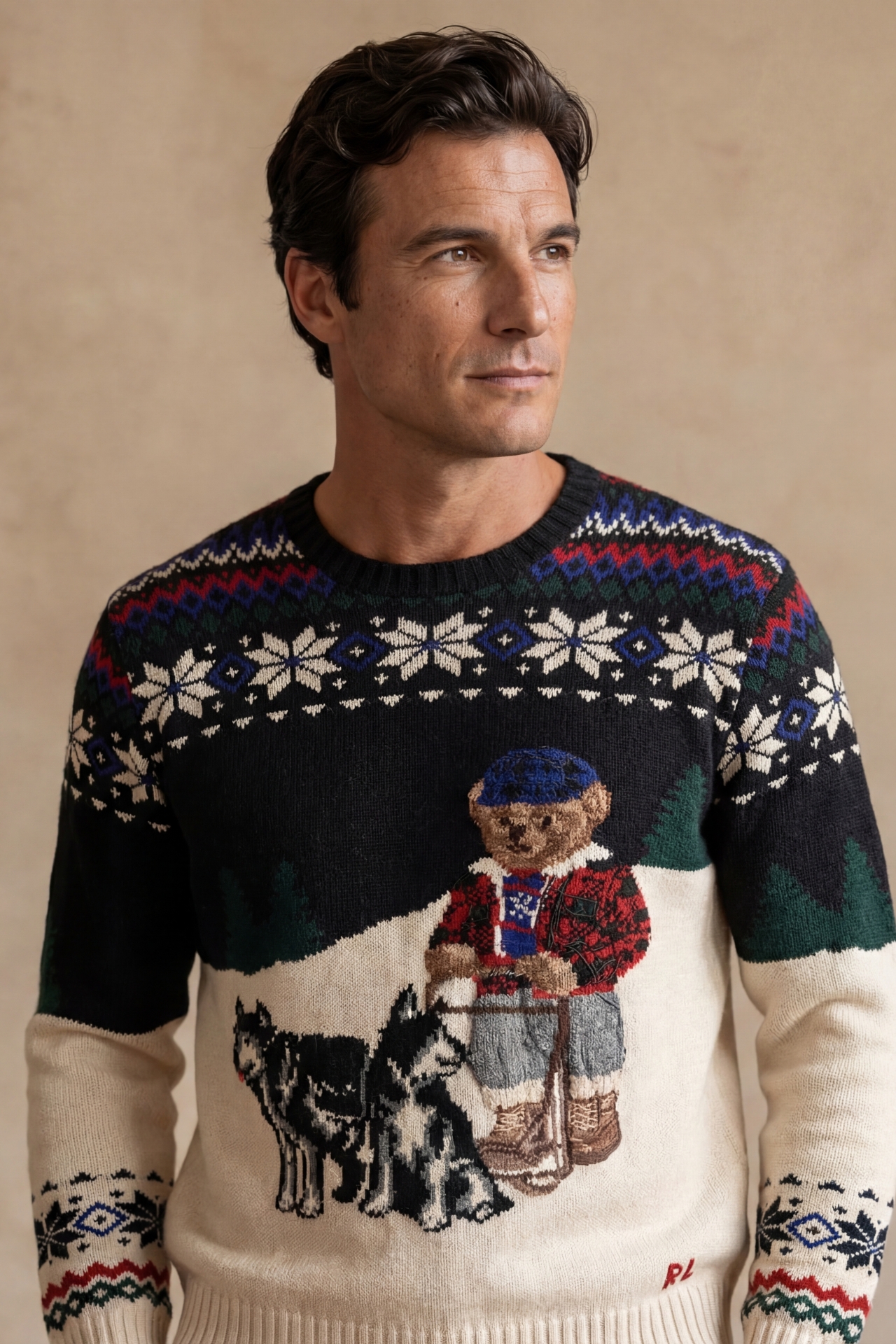

I found myself thinking in terms of scenes rather than prompts: what is happening in the image, who is present, what kind of environment this moment belongs to, how light would behave in that space. The focus shifted from generating a single image to constructing a visual system where each element relates to the others.

Consistency as the real constraint

Consistency became the central constraint. Not only within a single frame, but across multiple images. A character needs to remain recognisable, an environment needs to feel stable, objects need to belong to the same material world. Without this, the images start to feel interchangeable, even when they are visually different.

This is also where responsibility becomes more defined. It is easy to attribute imperfections to the system, to treat inconsistencies as a byproduct of the technology. But over time, that explanation feels less accurate. When something doesn’t work, it usually traces back to a lack of clarity in the direction. The tool executes what is given to it, but it does not decide what should be there in the first place.

Where the idea of “easy” breaks

Working towards something more editorial makes this even more visible. Emotion in these images is not something that can be added afterwards. It emerges from alignment, from how light, composition, materials and context relate to each other. Without that alignment, the result often feels flat, even if it is technically correct.

This is where the idea of ease starts to break down. Generating an image is simple. Constructing a visual narrative that holds together across multiple frames is not. As the volume of AI-generated content continues to grow, this difference becomes more apparent.

What remains visible

In a way, AI has made the role of direction more exposed. There is less room to rely on process or production constraints. What remains visible is the thinking behind the image. That part has not changed. It follows the same logic as any other form of visual work, where coherence, context and intention define the outcome.

The tools have evolved, but the underlying structure of how images come together remains the same. And when that structure is missing, it tends to show.